Chapter 7 Generalized Linear Models

7.1 Problem Setup

In the Linear Models Chapter 6, we assumed the generative process to be linear in the effects of the predictors \(x\). We now write that same linear model, slightly differently: \[ y|x \sim \mathcal{N}(x'\beta, \sigma^2). \]

This model not allow for the non-linear relations of Example 7.1, nor does it allow for the distribution of \(\varepsilon\) to change with \(x\), as in Example 7.2. Generalize linear models (GLM), as the name suggests, are a generalization of the linear models in Chapter 6 that allow that14.

For Example 7.1, we would like something in the lines of \[ y|x \sim Binom(1,p(x)) \]

For Example 7.2, we would like something in the lines of \[ y|x \sim \mathcal{N}(x'\beta,\sigma^2(x)), \] or more generally \[ y|x \sim \mathcal{N}(\mu(x),\sigma^2(x)), \] or maybe not Gaussian \[ y|x \sim Pois(\lambda(x)). \]

Even more generally, for some distribution \(F(\theta)\), with a parameter \(\theta\), we would like to assume that the data is generated via \[\begin{align} \tag{7.1} y|x \sim F(\theta(x)) \end{align}\]

Possible examples include \[\begin{align} y|x &\sim Poisson(\lambda(x)) \\ y|x &\sim Exp(\lambda(x)) \\ y|x &\sim \mathcal{N}(\mu(x),\sigma^2(x)) \end{align}\]

GLMs allow models of the type of Eq.(7.1), while imposing some constraints on \(F\) and on the relation \(\theta(x)\). GLMs assume the data distribution \(F\) to be in a “well-behaved” family known as the Natural Exponential Family of distributions. This family includes the Gaussian, Gamma, Binomial, Poisson, and Negative Binomial distributions. These five include as special cases the exponential, chi-squared, Rayleigh, Weibull, Bernoulli, and geometric distributions.

GLMs also assume that the distribution’s parameter, \(\theta\), is some simple function of a linear combination of the effects. In our cigarettes example this amounts to assuming that each cigarette has an additive effect, but not on the probability of cancer, but rather, on some simple function of it. Formally \[g(\theta(x))=x'\beta,\] and we recall that \[x'\beta=\beta_0 + \sum_j x_j \beta_j.\] The function \(g\) is called the link function, its inverse, \(g^{-1}\) is the mean function. We thus say that “the effects of each cigarette is linear in link scale”. This terminology will later be required to understand R’s output.

7.2 Logistic Regression

The best known of the GLM class of models is the logistic regression that deals with Binomial, or more precisely, Bernoulli-distributed data. The link function in the logistic regression is the logit function \[\begin{align} g(t)=log\left( \frac{t}{(1-t)} \right) \tag{7.2} \end{align}\] implying that under the logistic model assumptions \[\begin{align} y|x \sim Binom \left( 1, p=\frac{e^{x'\beta}}{1+e^{x'\beta}} \right). \tag{7.3} \end{align}\]

Before we fit such a model, we try to justify this construction, in particular, the enigmatic link function in Eq.(7.2). Let’s look at the simplest possible case: the comparison of two groups indexed by \(x\): \(x=0\) for the first, and \(x=1\) for the second. We start with some definitions.

Odds are the same as probabilities, but instead of telling me there is a \(66\%\) of success, they tell me the odds of success are “2 to 1”. If you ever placed a bet, the language of “odds” should not be unfamiliar to you.

Odds ratios (OR) compare between the probabilities of two groups, only that it does not compare them in probability scale, but rather in odds scale. You can also think of ORs as a measure of distance between two Brenoulli distributions. ORs have better mathematical properties than other candidate distance measures, such as \(P(y_1=1)-P(y_2=1)\).

Under the logit link assumption formalized in Eq.(7.3), the OR between two conditions indexed by \(y|x=1\) and \(y|x=0\), returns: \[\begin{align} OR(y|x=1,y|x=0) = \frac{P(y=1|x=1)/P(y=0|x=1)}{P(y=1|x=0)/P(y=0|x=0)} = e^{\beta_1}. \end{align}\]

The last equality demystifies the choice of the link function in the logistic regression: it allows us to interpret \(\beta\) of the logistic regression as a measure of change of binary random variables, namely, as the (log) odds-ratios due to a unit increase in \(x\).

7.2.1 Logistic Regression with R

Let’s get us some data.

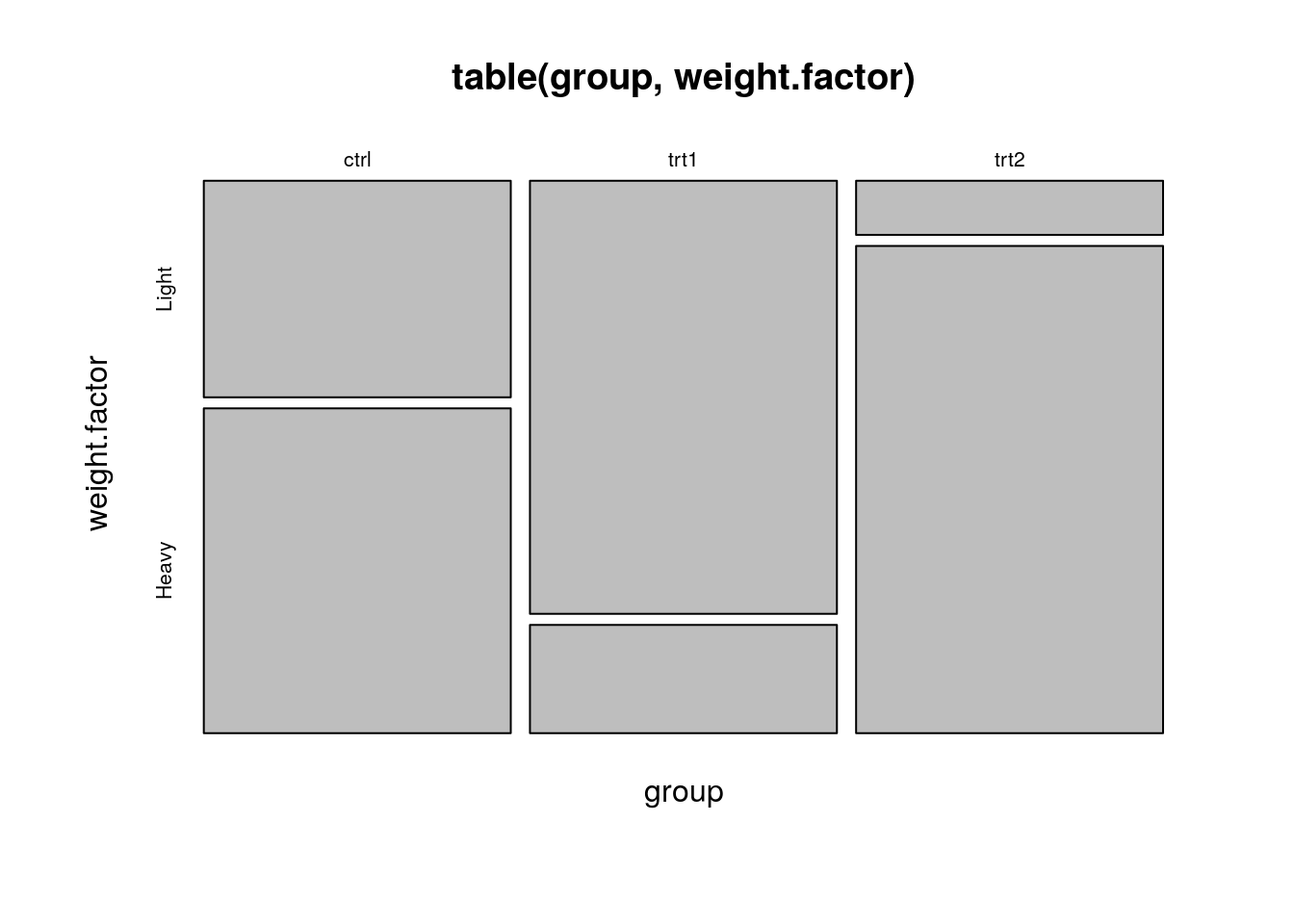

The PlantGrowth data records the weight of plants under three conditions: control, treatment1, and treatment2.

head(PlantGrowth)## weight group

## 1 4.17 ctrl

## 2 5.58 ctrl

## 3 5.18 ctrl

## 4 6.11 ctrl

## 5 4.50 ctrl

## 6 4.61 ctrlWe will now attach the data so that its contents is available in the workspace (don’t forget to detach afterwards, or you can expect some conflicting object names).

We will also use the cut function to create a binary response variable for Light, and Heavy plants (we are doing logistic regression, so we need a two-class response), notice also that cut splits according to range and not to length.

As a general rule of thumb, when we discretize continuous variables, we lose information.

For pedagogical reasons, however, we will proceed with this bad practice.

Look at the following output and think: how many group effects do we expect? What should be the sign of each effect?

attach(PlantGrowth)

weight.factor<- cut(weight, 2, labels=c('Light', 'Heavy')) # binarize weights

plot(table(group, weight.factor))

Let’s fit a logistic regression, and inspect the output.

glm.1<- glm(weight.factor~group, family=binomial)

summary(glm.1)##

## Call:

## glm(formula = weight.factor ~ group, family = binomial)

##

## Deviance Residuals:

## Min 1Q Median 3Q Max

## -2.1460 -0.6681 0.4590 0.8728 1.7941

##

## Coefficients:

## Estimate Std. Error z value Pr(>|z|)

## (Intercept) 0.4055 0.6455 0.628 0.5299

## grouptrt1 -1.7918 1.0206 -1.756 0.0792 .

## grouptrt2 1.7918 1.2360 1.450 0.1471

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## (Dispersion parameter for binomial family taken to be 1)

##

## Null deviance: 41.054 on 29 degrees of freedom

## Residual deviance: 29.970 on 27 degrees of freedom

## AIC: 35.97

##

## Number of Fisher Scoring iterations: 4Things to note:

- The

glmfunction is our workhorse for all GLM models. - The

familyargument ofglmtells R the respose variable is brenoulli, thus, performing a logistic regression. - The

summaryfunction is content aware. It gives a different output forglmclass objects than for other objects, such as thelmwe saw in Chapter 6. In fact, whatsummarydoes is merely callsummary.glm. - As usual, we get the coefficients table, but recall that they are to be interpreted as (log) odd-ratios, i.e., in “link scale”. To return to probabilities (“response scale”), we will need to undo the logistic transformation.

- As usual, we get the significance for the test of no-effect, versus a two-sided alternative. P-values are asymptotic, thus, only approximate (and can be very bad approximations in small samples).

- The residuals of

glmare slightly different than thelmresiduals, and called Deviance Residuals. - For help see

?glm,?family, and?summary.glm.

Like in the linear models, we can use an ANOVA table to check if treatments have any effect, and not one treatment at a time. In the case of GLMs, this is called an analysis of deviance table.

anova(glm.1, test='LRT')## Analysis of Deviance Table

##

## Model: binomial, link: logit

##

## Response: weight.factor

##

## Terms added sequentially (first to last)

##

##

## Df Deviance Resid. Df Resid. Dev Pr(>Chi)

## NULL 29 41.054

## group 2 11.084 27 29.970 0.003919 **

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1Things to note:

- The

anovafunction, like thesummaryfunction, are content-aware and produce a different output for theglmclass than for thelmclass. All thatanovadoes is callanova.glm. - In GLMs there is no canonical test (like the F test for

lm).LRTimplies we want an approximate Likelihood Ratio Test. We thus specify the type of test desired with thetestargument. - The distribution of the weights of the plants does vary with the treatment given, as we may see from the significance of the

groupfactor. - Readers familiar with ANOVA tables, should know that we computed the GLM equivalent of a type I sum- of-squares.

Run

drop1(glm.1, test='Chisq')for a GLM equivalent of a type III sum-of-squares. - For help see

?anova.glm.

Let’s predict the probability of a heavy plant for each treatment.

predict(glm.1, type='response')## 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18

## 0.6 0.6 0.6 0.6 0.6 0.6 0.6 0.6 0.6 0.6 0.2 0.2 0.2 0.2 0.2 0.2 0.2 0.2

## 19 20 21 22 23 24 25 26 27 28 29 30

## 0.2 0.2 0.9 0.9 0.9 0.9 0.9 0.9 0.9 0.9 0.9 0.9Things to note:

- Like the

summaryandanovafunctions, thepredictfunction is aware that its input is ofglmclass. All thatpredictdoes is callpredict.glm. - In GLMs there are many types of predictions. The

typeargument controls which type is returned. Usetype=responsefor predictions in probability scale; use `type=link’ for predictions in log-odds scale. - How do I know we are predicting the probability of a heavy plant, and not a light plant? Just run

contrasts(weight.factor)to see which of the categories of the factorweight.factoris encoded as 1, and which as 0. - For help see

?predict.glm.

Let’s detach the data so it is no longer in our workspace, and object names do not collide.

detach(PlantGrowth)We gave an example with a factorial (i.e. discrete) predictor. We can do the same with multiple continuous predictors.

data('Pima.te', package='MASS') # Loads data

head(Pima.te)## npreg glu bp skin bmi ped age type

## 1 6 148 72 35 33.6 0.627 50 Yes

## 2 1 85 66 29 26.6 0.351 31 No

## 3 1 89 66 23 28.1 0.167 21 No

## 4 3 78 50 32 31.0 0.248 26 Yes

## 5 2 197 70 45 30.5 0.158 53 Yes

## 6 5 166 72 19 25.8 0.587 51 Yesglm.2<- step(glm(type~., data=Pima.te, family=binomial(link='probit')))## Start: AIC=302.41

## type ~ npreg + glu + bp + skin + bmi + ped + age

##

## Df Deviance AIC

## - bp 1 286.92 300.92

## - skin 1 286.94 300.94

## - age 1 287.74 301.74

## <none> 286.41 302.41

## - ped 1 291.06 305.06

## - npreg 1 292.55 306.55

## - bmi 1 294.52 308.52

## - glu 1 342.35 356.35

##

## Step: AIC=300.92

## type ~ npreg + glu + skin + bmi + ped + age

##

## Df Deviance AIC

## - skin 1 287.50 299.50

## - age 1 287.92 299.92

## <none> 286.92 300.92

## - ped 1 291.70 303.70

## - npreg 1 293.06 305.06

## - bmi 1 294.55 306.55

## - glu 1 342.41 354.41

##

## Step: AIC=299.5

## type ~ npreg + glu + bmi + ped + age

##

## Df Deviance AIC

## - age 1 288.47 298.47

## <none> 287.50 299.50

## - ped 1 292.41 302.41

## - npreg 1 294.21 304.21

## - bmi 1 304.37 314.37

## - glu 1 343.48 353.48

##

## Step: AIC=298.47

## type ~ npreg + glu + bmi + ped

##

## Df Deviance AIC

## <none> 288.47 298.47

## - ped 1 293.78 301.78

## - bmi 1 305.17 313.17

## - npreg 1 305.49 313.49

## - glu 1 349.25 357.25summary(glm.2)##

## Call:

## glm(formula = type ~ npreg + glu + bmi + ped, family = binomial(link = "probit"),

## data = Pima.te)

##

## Deviance Residuals:

## Min 1Q Median 3Q Max

## -2.9935 -0.6487 -0.3585 0.6326 2.5791

##

## Coefficients:

## Estimate Std. Error z value Pr(>|z|)

## (Intercept) -5.445143 0.569373 -9.563 < 2e-16 ***

## npreg 0.102410 0.025607 3.999 6.35e-05 ***

## glu 0.021739 0.002988 7.276 3.45e-13 ***

## bmi 0.048709 0.012291 3.963 7.40e-05 ***

## ped 0.534366 0.250584 2.132 0.033 *

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## (Dispersion parameter for binomial family taken to be 1)

##

## Null deviance: 420.30 on 331 degrees of freedom

## Residual deviance: 288.47 on 327 degrees of freedom

## AIC: 298.47

##

## Number of Fisher Scoring iterations: 5Things to note:

- We used the

~.syntax to tell R to fit a model with all the available predictors. - Since we want to focus on significant predictors, we used the

stepfunction to perform a step-wise regression, i.e. sequentially remove non-significant predictors. The function reports each model it has checked, and the variable it has decided to remove at each step. - The output of

stepis a single model, with the subset of selected predictors.

7.3 Poisson Regression

Poisson regression means we fit a model assuming \(y|x \sim Poisson(\lambda(x))\). Put differently, we assume that for each treatment, encoded as a combinations of predictors \(x\), the response is Poisson distributed with a rate that depends on the predictors.

The typical link function for Poisson regression is the logarithm: \(g(t)=log(t)\). This means that we assume \(y|x \sim Poisson(\lambda(x) = e^{x'\beta})\). Why is this a good choice? We again resort to the two-group case, encoded by \(x=1\) and \(x=0\), to understand this model: \(\lambda(x=1)=e^{\beta_0+\beta_1}=e^{\beta_0} \; e^{\beta_1}= \lambda(x=0) \; e^{\beta_1}\). We thus see that this link function implies that a change in \(x\) multiples the rate of events by \(e^{\beta_1}\).

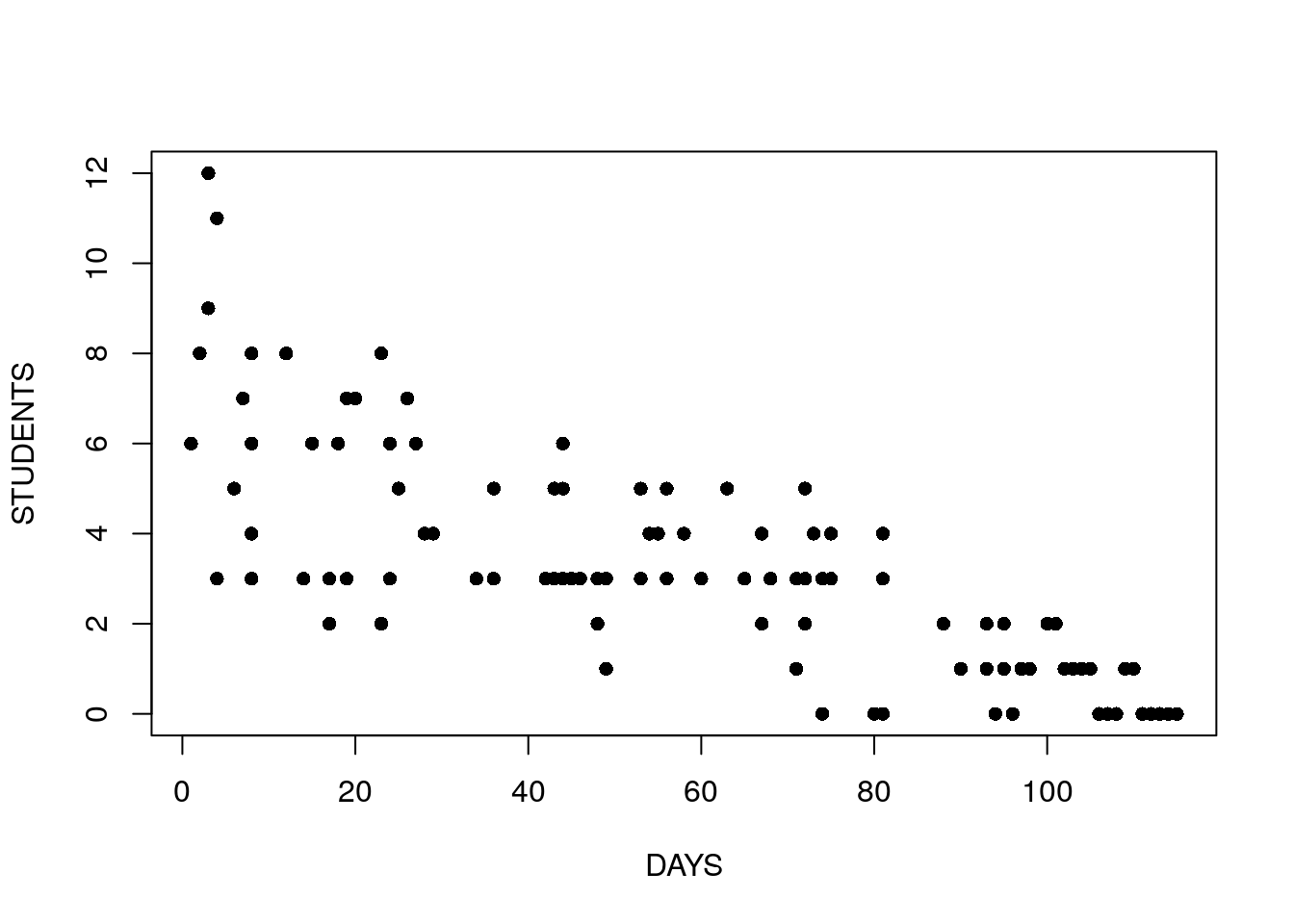

For our example15 we inspect the number of infected high-school kids, as a function of the days since an outbreak.

cases <-

structure(list(Days = c(1L, 2L, 3L, 3L, 4L, 4L, 4L, 6L, 7L, 8L,

8L, 8L, 8L, 12L, 14L, 15L, 17L, 17L, 17L, 18L, 19L, 19L, 20L,

23L, 23L, 23L, 24L, 24L, 25L, 26L, 27L, 28L, 29L, 34L, 36L, 36L,

42L, 42L, 43L, 43L, 44L, 44L, 44L, 44L, 45L, 46L, 48L, 48L, 49L,

49L, 53L, 53L, 53L, 54L, 55L, 56L, 56L, 58L, 60L, 63L, 65L, 67L,

67L, 68L, 71L, 71L, 72L, 72L, 72L, 73L, 74L, 74L, 74L, 75L, 75L,

80L, 81L, 81L, 81L, 81L, 88L, 88L, 90L, 93L, 93L, 94L, 95L, 95L,

95L, 96L, 96L, 97L, 98L, 100L, 101L, 102L, 103L, 104L, 105L,

106L, 107L, 108L, 109L, 110L, 111L, 112L, 113L, 114L, 115L),

Students = c(6L, 8L, 12L, 9L, 3L, 3L, 11L, 5L, 7L, 3L, 8L,

4L, 6L, 8L, 3L, 6L, 3L, 2L, 2L, 6L, 3L, 7L, 7L, 2L, 2L, 8L,

3L, 6L, 5L, 7L, 6L, 4L, 4L, 3L, 3L, 5L, 3L, 3L, 3L, 5L, 3L,

5L, 6L, 3L, 3L, 3L, 3L, 2L, 3L, 1L, 3L, 3L, 5L, 4L, 4L, 3L,

5L, 4L, 3L, 5L, 3L, 4L, 2L, 3L, 3L, 1L, 3L, 2L, 5L, 4L, 3L,

0L, 3L, 3L, 4L, 0L, 3L, 3L, 4L, 0L, 2L, 2L, 1L, 1L, 2L, 0L,

2L, 1L, 1L, 0L, 0L, 1L, 1L, 2L, 2L, 1L, 1L, 1L, 1L, 0L, 0L,

0L, 1L, 1L, 0L, 0L, 0L, 0L, 0L)), .Names = c("Days", "Students"

), class = "data.frame", row.names = c(NA, -109L))

attach(cases)

head(cases) ## Days Students

## 1 1 6

## 2 2 8

## 3 3 12

## 4 3 9

## 5 4 3

## 6 4 3Look at the following plot and think:

- Can we assume that the errors have constant variance?

- What is the sign of the effect of time on the number of sick students?

- Can we assume a linear effect of time?

plot(Days, Students, xlab = "DAYS", ylab = "STUDENTS", pch = 16)

We now fit a model to check for the change in the rate of events as a function of the days since the outbreak.

glm.3 <- glm(Students ~ Days, family = poisson)

summary(glm.3)##

## Call:

## glm(formula = Students ~ Days, family = poisson)

##

## Deviance Residuals:

## Min 1Q Median 3Q Max

## -2.00482 -0.85719 -0.09331 0.63969 1.73696

##

## Coefficients:

## Estimate Std. Error z value Pr(>|z|)

## (Intercept) 1.990235 0.083935 23.71 <2e-16 ***

## Days -0.017463 0.001727 -10.11 <2e-16 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## (Dispersion parameter for poisson family taken to be 1)

##

## Null deviance: 215.36 on 108 degrees of freedom

## Residual deviance: 101.17 on 107 degrees of freedom

## AIC: 393.11

##

## Number of Fisher Scoring iterations: 5Things to note:

- We used

family=poissonin theglmfunction to tell R that we assume a Poisson distribution. - The coefficients table is there as usual. When interpreting the table, we need to recall that the effect, i.e. the \(\hat \beta\), are multiplicative due to the assumed link function.

- Each day decreases the rate of events by a factor of about \(e^{\beta_1}=\) 0.98.

- For more information see

?glmand?family.

7.4 Extensions

As we already implied, GLMs are a very wide class of models.

We do not need to use the default link function,but more importantly, we are not constrained to Binomial, or Poisson distributed response.

For exponential, gamma, and other response distributions, see ?glm or the references in the Bibliographic Notes section.

7.5 Bibliographic Notes

The ultimate reference on GLMs is McCullagh (1984). For a less technical exposition, we refer to the usual Venables and Ripley (2013).

7.6 Practice Yourself

Try using

lmfor analyzing the plant growth data inweight.factoras a function ofgroupin thePlantGrowthdata.- Generate some synthetic data for a logistic regression:

- Generate two predictor variables of length \(100\). They can be random from your favorite distribution.

- Fix

beta<- c(-1,2), and generate the response with:rbinom(n=100,size=1,prob=exp(x %*% beta)/(1+exp(x %*% beta))). Think: why is this the model implied by the logistic regression? - Fit a Logistic regression to your synthetic data using

glm. - Are the estimated coefficients similar to the true ones you used?

- What is the estimated probability of an event at

x=1,1? Usepredict.glmbut make sure to read the documentation on thetypeargument.

- Read about the

epildataset using? MASS::epil. Inspect the dependency of the number of seizures (\(y\)) in the age of the patient (age) and the treatment (trt).- Fit a Poisson regression with

glmandfamily = "poisson". - Are the coefficients significant?

- Does the treatment reduce the frequency of the seizures?

- According to this model, what would be the number of seizures for 20 years old patient with progabide treatment?

- Fit a Poisson regression with

See DataCamp’s Generalized Linear Models in R for more self practice.

References

McCullagh, Peter. 1984. “Generalized Linear Models.” European Journal of Operational Research 16 (3). Elsevier: 285–92.

Venables, William N, and Brian D Ripley. 2013. Modern Applied Statistics with S-Plus. Springer Science & Business Media.

Do not confuse generalized linear models with non-linear regression, or generalized least squares. These are different things, that we do not discuss.↩

Taken from http://www.theanalysisfactor.com/generalized-linear-models-in-r-part-6-poisson-regression-count-variables/↩